Nvidia Soars to New Heights Amidst AI Turbulence

- Nvidia’s record sales and booming cash flow have sparked both optimism and concern among investors and critics alike.

- As the tech giant continues to dominate the AI hardware market, worries about AI’s destabilizing impact on society continue to grow.

- The disconnect between Nvidia’s financial success and the mounting AI fears has raised questions about the future of the tech industry and its role in shaping the world.

Unpacking the Paradox of Nvidia’s Success

Nvidia, the leader in graphics processing units (GPUs) and a key player in the development of artificial intelligence (AI), has just released its latest financial report, revealing record-breaking sales and an unprecedented surge in cash flow. This news comes as a significant boost to the company’s stock and a testament to its dominant position in the tech industry. However, this success story is juxtaposed with growing concerns about the impact of AI on society, sparking debates about the ethics, safety, and long-term implications of this rapidly evolving technology.

The juxtaposition of Nvidia’s financial triumph with the mounting fears about AI’s influence is not merely coincidental. It reflects a broader paradox within the tech industry, where innovation and profit often precede comprehensive understanding and regulation of the technologies being developed. As AI becomes increasingly integrated into various aspects of life, from healthcare and finance to education and governance, the need for a nuanced discussion about its benefits and risks has become more pressing than ever.

At the heart of the debate lies the question of whether the tech industry, and companies like Nvidia, are adequately addressing the ethical and societal implications of their innovations. While financial success is a critical metric for any company, it is equally important to consider the long-term consequences of technological advancements on human society and the planet. As we delve deeper into the world of AI and its applications, it becomes clear that the path forward requires a balanced approach, one that harnesses the potential of technology while mitigating its risks.

The Rise of Nvidia: A Story of Innovation and Dominance

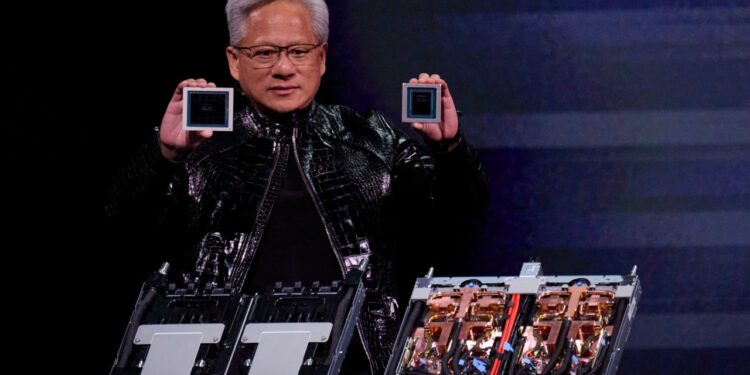

Nvidia’s journey to the top of the tech industry is a story of innovative spirit, strategic vision, and relentless pursuit of excellence. Founded in 1993 by Jensen Huang, Chris Malachowsky, and Curtis Priem, the company started as a specialist in graphics cards, aiming to bring high-quality graphics to the masses. Over the years, Nvidia has not only revolutionized the field of computer graphics but has also become a pivotal player in the development of AI, with its GPUs serving as the backbone for many AI systems.

The company’s success in the AI sector can be attributed to its early recognition of the technology’s potential and its proactive approach to developing hardware and software solutions tailored to AI needs. Nvidia’s CUDA platform, for example, opened its GPUs to general-purpose computing and has been instrumental in speeding up the training of AI models on complex datasets, while its Deep Learning Super Sampling (DLSS) technology applies AI to upscale rendered graphics in games. Moreover, the company’s commitment to research and development, as evidenced by its substantial investments in AI research initiatives and its collaboration with academic and industrial partners, has further cemented its position as a leader in the AI hardware market.

However, Nvidia’s dominance in the AI hardware sector also raises questions about the concentration of power and influence within the tech industry. The reliance of many AI startups and researchers on Nvidia’s hardware and software tools underscores the company’s significant market share and its potential to shape the direction of AI development. While this concentration can drive innovation and efficiency, it also presents challenges related to competition, accessibility, and the diversification of ideas, which are essential for the healthy development of any technological field.

The balance between innovation and competition is crucial for the tech industry, as it fosters an environment where different perspectives and solutions can emerge, ultimately leading to more robust and versatile technologies. In the context of AI, this balance is particularly important, given the technology’s far-reaching implications for society. As such, the role of regulatory bodies and the need for open standards and accessible technologies become more pronounced, ensuring that the benefits of AI are equitably distributed and its risks are mitigated through collective effort and oversight.

Furthermore, Nvidia’s expansion into the data center market with its AI-centric hardware and software solutions has been a significant factor in its recent success. The company’s data center business has seen substantial growth, driven by the increasing demand for AI computing power from cloud service providers, enterprises, and startups. This shift towards data center and cloud computing not only reflects the evolving needs of the tech industry but also highlights the strategic importance of AI in modern computing infrastructures.

Nvidia’s rise to dominance in the tech industry, particularly in the AI sector, is a testament to the company’s innovative prowess and its ability to adapt to changing technological landscapes. However, this success also underscores the need for a broader discussion about the implications of AI and the responsibilities that come with technological leadership. As Nvidia and other tech giants continue to push the boundaries of what is possible with AI, they must also engage in a dialogue about the ethical, social, and environmental impacts of their innovations. The aim is to ensure that the benefits of technology are shared by all and that its risks are managed through collective responsibility and foresight.

The Ethical Dilemmas of AI: Navigating the Future

The development and deployment of AI systems present a myriad of ethical dilemmas, from issues of bias and fairness in decision-making algorithms to concerns about privacy, security, and the potential for job displacement. As AI becomes increasingly intertwined with various aspects of life, the need for ethical guidelines and regulatory frameworks that can address these challenges has become more pressing. However, the complexity of AI systems and the rapid pace of innovation in the field often make it difficult to establish clear-cut rules and standards for ethical AI development and use.

One of the most significant ethical challenges facing AI developers is the problem of bias in AI systems. Since AI models are trained on data that reflects the world as it is, including all its biases and prejudices, there is a risk that these models will replicate and even amplify existing social inequalities. For instance, facial recognition systems have been shown to have higher error rates for people with darker skin tones, and natural language processing models have been found to reflect gender biases present in the data used to train them. Addressing these biases requires a concerted effort to diversify training datasets, implement fairness metrics, and engage in ongoing auditing and testing to ensure that AI systems are fair and unbiased.
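To make the idea of a fairness metric concrete, the following is a minimal sketch of one simple audit: comparing a model's error rate across demographic groups. The function names (`error_rate_by_group`, `max_error_gap`) and the toy data are hypothetical and chosen purely for illustration; real audits typically use richer metrics and far larger samples.

```python
from collections import defaultdict


def error_rate_by_group(y_true, y_pred, groups):
    """Return {group: fraction of that group's predictions that are wrong}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        if truth != pred:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}


def max_error_gap(rates):
    """Largest difference in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Made-up labels and predictions for two hypothetical groups, "a" and "b".
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = error_rate_by_group(y_true, y_pred, groups)
print(rates)                 # per-group error rates
print(max_error_gap(rates))  # a large gap is a signal to investigate further
```

A large gap does not by itself prove a system is unfair, but it is the kind of signal that ongoing auditing is meant to surface.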

Another critical ethical consideration in AI development is privacy. As AI systems increasingly rely on personal data to function effectively, ensuring that this data is collected, stored, and used responsibly becomes paramount. This involves not only complying with existing privacy regulations but also adopting transparent practices that inform users about how their data is being used and providing them with meaningful control over their personal information. The integration of privacy-enhancing technologies into AI systems can also help mitigate privacy risks: differential privacy adds calibrated noise to results to limit what an analysis can reveal about any one individual, and federated learning trains models on users’ devices so that raw data does not need to be pooled centrally.
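As an illustration of how differential privacy works in practice, here is a minimal sketch of the Laplace mechanism applied to a counting query. The helper names (`laplace_noise`, `private_count`) and the sample data are hypothetical, and the sketch assumes a simple count, where adding or removing one person changes the answer by at most 1.

```python
import random


def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon, rng=None):
    """Return a noisy count of items matching `predicate`.

    A counting query has sensitivity 1, so the noise scale is 1 / epsilon.
    Smaller epsilon means more noise and stronger privacy.
    """
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1 / epsilon, rng)


# Made-up ages; the query asks how many people are 40 or older.
ages = [23, 35, 41, 52, 29, 64, 38, 47]
noisy = private_count(ages, lambda age: age >= 40, epsilon=1.0)
print(noisy)  # close to the true count of 4, but randomized on each run
```

The published figure stays useful in aggregate while making it harder to infer whether any particular person is in the dataset.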

The potential for AI to displace jobs is another significant concern, as automation and AI-driven processes become more prevalent in various sectors. While AI has the potential to create new job opportunities and enhance productivity, it also poses a risk to employment, particularly for roles that are susceptible to automation. Addressing this challenge requires a multifaceted approach, including investing in education and retraining programs that can equip workers with the skills needed to thrive in an AI-driven economy, as well as considering social safety nets and policies that can support workers who are displaced by automation.

Lastly, the development of AI raises fundamental questions about accountability and responsibility. As AI systems make decisions that can have significant impacts on individuals and society, it becomes essential to establish clear lines of accountability and to ensure that there are mechanisms in place for addressing errors, biases, or other issues that may arise. This involves not only developing more transparent and explainable AI models but also creating legal and regulatory frameworks that can hold developers and deployers of AI systems accountable for their actions.

In navigating the future of AI, it is crucial to engage in a broad and inclusive dialogue about these ethical dilemmas. This involves not only tech companies and regulatory bodies but also civil society, academia, and the general public. By working together, we can develop ethical guidelines, regulatory frameworks, and technological solutions that can mitigate the risks associated with AI and ensure that its benefits are equitably distributed and its development is guided by human values and principles of social responsibility.

Regulating AI: The Path Forward

The regulation of AI is a complex and multifaceted challenge that requires a comprehensive and nuanced approach. Given the rapid evolution of AI technologies and their diverse applications across various sectors, establishing effective regulatory frameworks that can keep pace with innovation while protecting societal interests is crucial. This involves a collaborative effort between governments, industry stakeholders, civil society, and the academic community to develop and implement regulations that are both effective and adaptable to the changing landscape of AI development and deployment.

One of the key challenges in regulating AI is balancing the need to foster innovation with the necessity to mitigate risks. Overly restrictive regulations could stifle the development of AI, limiting its potential benefits, while inadequate oversight could lead to significant harm. Therefore, regulatory approaches must be carefully calibrated to address specific risks and challenges associated with AI, such as ensuring transparency and explainability in AI decision-making, protecting privacy, and preventing discrimination.

Existing regulatory frameworks, such as the General Data Protection Regulation (GDPR) in the European Union, provide a foundation for addressing some of the privacy and data protection concerns related to AI. However, these frameworks may need to be expanded or modified to specifically address the unique challenges posed by AI, including its potential for widespread societal impact and the complexities of attributing responsibility in AI-driven decision-making.

Moreover, the development of international standards and cooperation on AI governance is essential, given the global nature of AI development and deployment. This could involve the establishment of international guidelines for AI development, shared standards for AI safety and security, and collaborative mechanisms for addressing the global implications of AI. The participation of multiple stakeholders, including governments, industry leaders, and civil society organizations, in these efforts is vital for ensuring that the governance of AI reflects a broad range of perspectives and priorities.

In addition to regulatory measures, industry-led initiatives and voluntary standards can play a significant role in promoting responsible AI development and use. Companies like Nvidia, with their significant influence in the AI ecosystem, have a particular responsibility to lead by example, implementing robust ethical guidelines, investing in research on AI safety and fairness, and advocating for regulatory frameworks that support the beneficial development of AI.

Ultimately, the path forward for AI regulation involves a dynamic interplay between technological innovation, societal needs, and regulatory oversight. By fostering a culture of responsibility, transparency, and continuous learning, and by engaging in a global dialogue about the future of AI, we can navigate the complex landscape of AI development and ensure that its benefits are realized while minimizing its risks.

Conclusion: The Future of AI and Nvidia’s Role

In conclusion, the story of Nvidia’s success amidst growing AI fears is a complex tapestry of innovation, ethics, and societal implications. As the tech industry continues to push the boundaries of what is possible with AI, it is imperative that companies like Nvidia, regulatory bodies, and society at large engage in a comprehensive and ongoing dialogue about the future of AI and its impact on the world. This dialogue must address the ethical challenges, regulatory needs, and societal implications of AI development and deployment, with the aim of ensuring that AI is developed and used in ways that are beneficial, safe, and fair for all.

Nvidia, as a leader in the AI hardware market, has a significant role to play in this dialogue. Its commitment to innovation gives it the resources and influence to act, and a proactive approach to addressing the ethical and societal implications of AI would be a crucial step towards a future where AI enhances human life without exacerbating existing social and environmental challenges. By continuing to invest in AI research, by advocating for responsible AI development and use, and by collaborating with stakeholders across the globe, Nvidia can help shape a future for AI that is both promising and responsible.

Furthermore, the future of AI will be shaped by a myriad of factors, including technological advancements, regulatory frameworks, societal values, and global cooperation. As we move forward, it is essential to recognize that AI is not just a technological phenomenon but a societal one, with the potential to affect almost every aspect of human life. Therefore, the development and deployment of AI must be guided by a deep understanding of its implications and a commitment to ensuring that its benefits are equitably distributed and its risks are mitigated through collective effort and foresight.

In the end, the success of Nvidia and the future of AI are intertwined with the broader narrative of technological progress and human society. As we embark on this journey into the uncharted territories of AI, we must do so with a sense of responsibility, a commitment to ethical considerations, and a vision for a future where technology serves humanity, enhancing our capabilities and well-being and protecting our planet.

The Role of Society in Shaping AI’s Future

The role of society in shaping the future of AI is multifaceted and indispensable. As AI becomes increasingly integrated into various aspects of life, from healthcare and education to employment and entertainment, the need for societal engagement and oversight has become more critical than ever. Society, through its diverse perspectives and values, has the power to influence the direction of AI development and ensure that AI technologies are aligned with human needs, values, and aspirations.

One of the key ways society can shape AI’s future is through education and awareness. By educating the public about the capabilities, limitations, and implications of AI, we can foster a more informed and engaged citizenry, capable of participating in the ongoing dialogue about AI’s role in society. This education should not be limited to the technical aspects of AI but should also encompass its ethical, social, and cultural dimensions, preparing individuals to navigate the complex landscape of AI-driven change.

Societal engagement in AI governance is also essential, as it can provide the moral and ethical framework necessary for guiding AI development and deployment. This involves not only participating in public debates and consultations about AI policy and regulation but also advocating for AI systems that reflect societal values such as fairness, transparency, and accountability. Civil society organizations, community groups, and individual advocates play a vital role in this process, ensuring that the voices of diverse stakeholders are heard and that AI development is responsive to the needs and concerns of all segments of society.

Finally, the role of society in shaping AI’s future is also reflected in its capacity to demand accountability and responsibility from AI developers and deployers. By setting high standards for AI ethics, safety, and security, and by holding companies and governments accountable for the impact of AI systems, society can ensure that AI is developed and used in ways that are beneficial, fair, and sustainable for all. This involves a continuous dialogue between stakeholders, ongoing evaluation of AI’s societal implications, and a commitment to adapting and evolving our approaches to AI governance as the technology and its impacts continue to evolve.

The Intersection of AI and Human Values

The intersection of AI and human values is a critical area of exploration, as AI systems increasingly influence various aspects of human life and society. At the heart of this intersection are questions about how AI can be developed and used in ways that align with and promote human values such as dignity, autonomy, privacy, and fairness. This involves not only ensuring that AI systems are designed to respect and protect these values but also addressing the broader societal and cultural implications of AI-driven change.

One of the most significant challenges in aligning AI with human values is the need to define and operationalize these values in the context of AI development. This requires a nuanced understanding of what human values mean in the age of AI and how they can be translated into technical specifications and design principles for AI systems. It also involves recognizing that human values are diverse and can vary across different cultures and societies, necessitating an approach to AI development that is sensitive to these differences and adaptable to different cultural contexts.

The development of value-aligned AI also depends on the creation of AI systems that are transparent, explainable, and accountable. This means developing AI models that can provide insights into their decision-making processes, allowing for the identification and mitigation of biases, and ensuring that AI systems are designed to be auditable and subject to human oversight. Furthermore, it involves establishing regulatory frameworks and industry standards that promote transparency, accountability, and the protection of human rights in AI development and deployment.

In addition to these technical and regulatory efforts, the intersection of AI and human values highlights the importance of interdisciplinary research and collaboration. Bringing together AI researchers, social scientists, philosophers, and stakeholders from various sectors can facilitate a deeper understanding of the complex interplay between AI and human values, leading to the development of AI systems that are not only technologically advanced but also socially responsible and ethically sound.

Lastly, the intersection of AI and human values underscores the need for a global and inclusive dialogue about the future of AI. This dialogue must engage diverse perspectives and voices, recognizing that the development and deployment of AI are global phenomena with far-reaching implications for humanity. By fostering such a dialogue and working together to align AI with human values, we can ensure that AI serves as a force for good, enhancing human well-being, dignity, and autonomy, rather than undermining them.