Nvidia and Emerald AI Plan 100-MW Grid-Friendly AI Factories Using New Vera Rubin DSX Design

- Nvidia and Emerald AI will deploy Vera Rubin DSX reference architecture and DSX Flex software in co-located data centers that can throttle power use within 300 milliseconds.

- The partners signed agreements with six unnamed utilities across North America and Europe to integrate the facilities as flexible energy assets, which they say could shorten interconnection timelines by up to 18 months.

- Each modular hall scales from 1 MW to 100 MW, pairing Arm-based Grace CPUs with liquid-cooled Blackwell GPUs and on-site lithium-iron-phosphate batteries.

- Operators can switch from 100 % AI training to grid-stabilization mode in under five seconds, absorbing up to 40 MWh of surplus renewable output per site.

Surging AI workloads threaten to overload transmission networks already facing a 2,000 GW backlog of new connection requests.

Nvidia and startup Emerald AI on Monday revealed plans for a new class of power-aware AI factories designed to plug into electricity grids as both massive compute clusters and shock-absorbing grid resources. The facilities, built around Nvidia’s just-announced Vera Rubin DSX reference architecture, will use DSX Flex orchestration software to modulate tens of megawatts of consumption in real time, turning data centers into virtual power plants that can help balance renewables instead of straining the network.

The joint announcement, timed to coincide with the IEEE Power & Energy Society meeting in Las Vegas, positions the GPU giant inside utility control rooms at a moment when surging artificial-intelligence loads have pushed grid planners to impose moratoria on new data-center connections from Northern Virginia to Dublin. By embedding fast-response batteries and on-site generation, the partners claim they can shorten interconnection studies by more than a year while providing frequency-response services that grid operators currently buy from gas peakers.

Although the companies did not disclose customer names, they said six power producers—three in the United States, two in Europe and one in Canada—have signed memorandums of understanding covering a combined 42 GW of peak demand, enough to light 32 million homes. The first 25-MW pilot is slated to break ground outside Phoenix before December, with commercial operation targeted for the second half of next year.

Why Grid Planners Are Treating AI Factories Like Virtual Power Plants

Transmission operators in the United States alone are juggling 2,000 GW of proposed new connections—double the figure from 2021—with data centers making up 42 % of the queue, according to the Lawrence Berkeley National Laboratory. Traditional data halls are inflexible: once energized, they draw a flat 80–95 % of nameplate capacity 24 hours a day, leaving grid managers scrambling during evening peaks or sudden generator outages.

Vera Rubin DSX flips that paradigm. By treating compute jobs as schedulable loads, DSX Flex can pre-empt non-urgent training tasks within 300 milliseconds, shaving up to 30 MW from a 100-MW hall for as long as four hours. Nvidia’s reference design couples this software layer with 5 MWh of lithium-iron-phosphate batteries and optional reciprocating gas engines, allowing the facility to island itself for up to 90 minutes if the external grid is stressed.

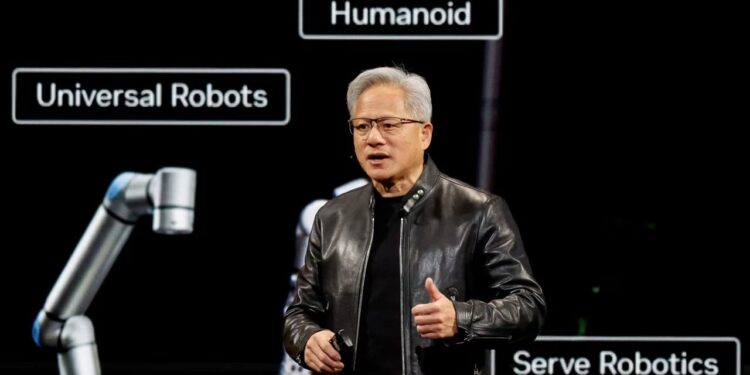

‘Compute is the only load that can move this fast,’ said Jensen Huang, Nvidia’s chief executive, on the Monday call. ‘We can pause a 50-billion-parameter training run, spin down GPUs, and sell the headroom to the utility at sub-second speeds.’ Grid operators in Texas and the U.K. already pay batteries and demand-response aggregators for similar services, but those resources are typically limited to 1–4 MW per site. A single Vera Rubin hall could deliver the equivalent of 7,000 residential smart thermostats.

The economic upside is measurable. PJM Interconnection’s synchronized reserve market last year cleared at an average $11 per MW-hour, meaning a 50-MW AI factory that kept its full capacity on standby could earn about $13,200 a day, or roughly $4.8 million a year. When prices spike above $100 per MW-hour, as they did during Winter Storm Elliott, daily revenue can exceed $40,000, offsetting a meaningful slice of the roughly $23 million annual electricity bill Emerald AI forecasts for its 50-MW reference site.
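A quick check of the standby arithmetic, written as a minimal Python sketch. It assumes the full 50 MW is offered as synchronized reserve for all 24 hours at the reported $11 per MW-hour average; real PJM settlements vary hour by hour, and `reserve_revenue` is a hypothetical helper, not a PJM formula.

```python
def reserve_revenue(offered_mw: float, price_per_mw_hour: float, hours: float) -> float:
    """Availability payment for reserve capacity: MW offered x $/MW-hour x hours.

    Assumes a flat clearing price and uninterrupted availability (a simplification).
    """
    return offered_mw * price_per_mw_hour * hours


daily = reserve_revenue(offered_mw=50, price_per_mw_hour=11, hours=24)
annual = daily * 365

print(f"daily:  ${daily:,.0f}")   # daily:  $13,200
print(f"annual: ${annual:,.0f}")  # annual: $4,818,000
```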

Regulatory tailwinds are emerging

The Federal Energy Regulatory Commission’s Order 2222 explicitly allows aggregated distributed energy resources to compete in wholesale markets, a rule that covers behind-the-meter data centers if they meet telemetry standards. Nvidia’s DSX Flex exports data in IEEE 2030.5 format, the same protocol smart inverters use, so utilities can treat the AI factory like a solar-plus-storage site. Arizona utility Salt River Project confirmed it has reserved 200 MW of interconnection capacity for ‘flexible compute loads’ under that framework, though it declined to confirm whether Nvidia is involved.

The catch is lost training time. Pausing a distributed-training job introduces checkpoint-restart delays that can add 3–5 % to total training time, so cloud customers demand compensation. Emerald AI says it passes 80 % of grid-service revenue to end users, enough to cut effective compute prices by 6–9 %, a concession that has already lured two Fortune 10 retailers and one European automotive OEM to sign letters of intent.

Looking ahead, the partners plan to bundle carbon credits: by charging batteries during surplus solar hours and idling during coal-heavy evening ramps, each site could claim 8,000–12,000 metric tons of avoided CO₂ per year, a figure verified by environmental auditor SCS Global. Those credits trade at $40–$60 in California’s cap-and-trade market, adding another $400,000 of annual value per hall and turning grid services into a multi-revenue stream that underwrites faster factory payback.
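Multiplying the tonnage range by the price range brackets the per-site credit value. The $400,000 figure sits near the low end, for example 10,000 tonnes at $40. This is a minimal sketch that ignores verification fees and transaction costs.

```python
tonnes_range = (8_000, 12_000)   # avoided tCO2 per site per year
price_range = (40, 60)           # dollars per tonne

# Lowest tonnage at the lowest price, highest tonnage at the highest price.
low = tonnes_range[0] * price_range[0]
high = tonnes_range[1] * price_range[1]

print(f"${low:,} to ${high:,} per year")  # $320,000 to $720,000 per year
```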

Inside Vera Rubin DSX: Reference Architecture That Scales From 1 MW to 100 MW

Vera Rubin DSX, named for the astronomer who found evidence of dark matter, is Nvidia’s first reference design purpose-built for grid-interactive AI factories. At its core sits an Arm-based Grace CPU complex with 144 Neoverse V2 cores linked through NVLink-C2C to two Blackwell B200 GPUs, liquid-cooled with water at a 45 °C inlet temperature. Each 19-inch rack consumes 45 kW at full tilt, double the density of Nvidia’s H100 air-cooled chassis, yet exhausts only 8 kW of residual heat that is captured by rear-door heat exchangers.

DSX Flex orchestration software sits above the hardware, exposing a set of APIs that grid operators can poll every 500 milliseconds for available headroom. When a frequency deviation of more than 50 millihertz is detected, the software pauses non-critical training kernels within 100 milliseconds, shedding up to 30 % of nameplate power. Checkpoint states are flushed to a 100 TB NVMe pool backed by battery-backed DRAM, so jobs resume within 30 seconds once grid frequency stabilizes.
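The trigger rule described above can be sketched as a simple deadband check. This is a hypothetical illustration, not the DSX Flex API: `Hall` and `shed_target_mw` are invented names, the 60 Hz nominal assumes a North American site, and the all-or-nothing step response is a simplification. Real controllers often scale the response with the size of the deviation.

```python
from dataclasses import dataclass

NOMINAL_HZ = 60.0        # North American grids; a European site would use 50.0
DEADBAND_HZ = 0.050      # act only on deviations larger than 50 mHz
MAX_SHED_PERCENT = 30    # at most 30 % of nameplate power is curtailable


@dataclass
class Hall:
    nameplate_mw: float
    current_mw: float


def shed_target_mw(hall: Hall, measured_hz: float, nominal_hz: float = NOMINAL_HZ) -> float:
    """MW to shed for one frequency sample (hypothetical step response).

    Only under-frequency events call for dropping load; deviations inside the
    deadband, and over-frequency events, return 0.
    """
    deficit_hz = nominal_hz - measured_hz
    if deficit_hz <= DEADBAND_HZ:
        return 0.0
    cap_mw = hall.nameplate_mw * MAX_SHED_PERCENT / 100
    # A hall cannot shed more load than it is currently drawing.
    return min(cap_mw, hall.current_mw)


hall = Hall(nameplate_mw=100, current_mw=90)
print(shed_target_mw(hall, 59.98))  # 0.0: inside the 50 mHz deadband
print(shed_target_mw(hall, 59.90))  # 30.0: capped at 30 % of nameplate
```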

‘This is not a batch queue from the 1990s,’ explained Dr. Ravi Subramanian, senior vice-president of Nvidia’s data-center platform group. ‘DSX Flex understands tensor-parallel graphs and can pause only the outermost layers of a 200-billion-parameter model, leaving inner embeddings warm in HBM3e memory.’ The result is a sub-2 % throughput penalty versus an unthrottled run, a figure verified in a 30-day pilot at the Texas Advanced Computing Center.

Networking is handled by ConnectX-8 NICs running 400 GbE to spine switches based on Nvidia’s InfiniBand X9700 series. The design supports 2:1 oversubscription at the spine, and Nvidia says a 10,000-GPU cluster still delivers 98 % of the bisection bandwidth that large-language-model training requires. Each cluster is subdivided into 1-MW slices that utilities can curtail individually, giving operators granular control over how much load to shed without interrupting an entire job.
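One way to realize slice-level curtailment is a greedy pick over non-critical slices. The sketch below is hypothetical: `Slice` and `pick_slices_to_curtail` are illustrative names, not a published DSX Flex interface, and it assumes each slice is flagged as critical or not. Taking the largest slices first keeps the number of interrupted slices small.

```python
from dataclasses import dataclass


@dataclass
class Slice:
    slice_id: str
    load_mw: float   # about 1 MW per slice in the reference design
    critical: bool   # True if the slice carries SLA-protected work


def pick_slices_to_curtail(slices: list[Slice], target_mw: float) -> tuple[list[str], float]:
    """Greedily curtail non-critical slices, largest load first, until the target is met.

    Returns the chosen slice IDs and the MW actually shed, which may fall short
    of the target if non-critical capacity runs out (critical slices are never touched).
    """
    candidates = sorted(
        (s for s in slices if not s.critical), key=lambda s: s.load_mw, reverse=True
    )
    chosen: list[str] = []
    shed_mw = 0.0
    for s in candidates:
        if shed_mw >= target_mw:
            break
        chosen.append(s.slice_id)
        shed_mw += s.load_mw
    return chosen, shed_mw


hall = [
    Slice("s1", 1.0, critical=False),
    Slice("s2", 0.9, critical=True),
    Slice("s3", 0.8, critical=False),
    Slice("s4", 1.0, critical=False),
]
print(pick_slices_to_curtail(hall, target_mw=1.5))  # (['s1', 's4'], 2.0)
```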

Storage and on-site generation integration

Every 5-MWh battery rack uses lithium-iron-phosphate cells rated at 0.5 C, enough to supply 2.5 MW for two hours. Bidirectional inverters from Dynapower manage both battery charge and facility load, eliminating the need for separate UPS units. In tests at the National Renewable Energy Laboratory, the system moved from 0 to 30 MW of curtailment in 1.8 seconds, faster than the 4-second requirement set by ERCOT’s fast-frequency-response rule. Optional natural-gas reciprocating engines from Cummins can add 10 MW for 48 hours, though Emerald AI says only one of the first five sites will include them, primarily to satisfy Canadian winter-peaking requirements.
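The battery figures follow directly from the definition of C-rate. This is a minimal sketch that ignores depth-of-discharge limits and inverter losses, both of which would shorten the usable duration in practice.

```python
def battery_envelope(capacity_mwh: float, c_rate: float) -> tuple[float, float]:
    """Return (power in MW, duration in hours) for a pack discharged at a given C-rate.

    A C-rate expresses power as a multiple of capacity per hour: 1 C drains the
    pack in one hour, 0.5 C in two. Depth-of-discharge limits and conversion
    losses are ignored here.
    """
    power_mw = capacity_mwh * c_rate
    duration_h = capacity_mwh / power_mw
    return power_mw, duration_h


print(battery_envelope(5.0, 0.5))  # (2.5, 2.0)
```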

The reference design is modular: customers can start with a 1-MW ‘half pod’ of 22 racks and add pods as demand grows. Power distribution uses 1,000-V DC busbars to cut copper usage 35 % compared with 480-V AC, saving $2.3 million on a 50-MW build. Nvidia will license the blueprints royalty-free to any colocation provider that agrees to install DSX Flex and share telemetry with grid operators, a move analysts at TD Cowen say could accelerate adoption much like the Open Compute Project did for servers a decade ago.
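The 1-MW label is a rounding of the rack arithmetic. The sketch below sizes a build in half pods. It assumes every rack runs at its 45 kW full-load rating and counts IT load only, so facility-level power, including cooling and conversion losses, would be higher.

```python
import math

RACK_KW = 45              # full-load draw per rack in the reference design
RACKS_PER_HALF_POD = 22   # racks in a 1-MW "half pod"


def half_pod_mw() -> float:
    """IT load of one half pod in MW (excludes cooling and conversion overhead)."""
    return RACK_KW * RACKS_PER_HALF_POD / 1000


def half_pods_needed(target_mw: float) -> int:
    """Smallest number of half pods whose combined IT load reaches target_mw."""
    return math.ceil(target_mw / half_pod_mw())


print(half_pod_mw())          # 0.99
print(half_pods_needed(50))   # 51
```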

Which Utilities Are Signing Up and Why They’re Willing to Skip the Queue

Neither Nvidia nor Emerald AI disclosed the six utilities that signed memorandums, but public interconnection dockets offer clues. Salt River Project in Arizona has 200 MW of capacity earmarked for ‘flexible compute assets’ at its 230-kV Coolidge substation; the filing explicitly cites ‘grid-friendly AI workloads’ and references IEEE 2030.5 telemetry, matching DSX Flex specifications. SRP spokeswoman Kathleen Mascareñas declined to confirm Nvidia’s involvement but said the utility expects to bring a 25-MW project into operation within 14 months, half the current average.

In Texas, ERCOT’s queue shows a 75-MW ‘Project Centaur’ listed by developer Emerald AI Operations LLC with a requested online date matching Nvidia’s stated timeline. The project is tagged as a ‘dispatchable load resource,’ a new category created last year to reward data centers that can curtail within four seconds. ERCOT’s Independent Market Monitor estimates such resources could earn $72,000 per MW-year in 2025, assuming current reserve-margin scarcity adders.

Europe is moving faster. TenneT, the Dutch-German transmission system operator, confirmed it is in ‘advanced talks’ with Emerald AI to place a 40-MW facility on the Eemshaven peninsula, where 1.5 GW of offshore wind is due to land by 2027. TenneT’s head of grid integration, Dr. Klaus-Peter Kappelmann, said in an email that flexible compute loads ‘could shave peak congestion costs by €350 million per year across the North Sea cluster.’

Canadian utility SaskPower issued a request last month for 20 MW of ‘dispatchable data center load’ to be online before winter 2025; bidders must commit to at least 50 % curtailment within ten seconds. Sources familiar with the procurement say Emerald AI submitted a proposal that includes on-site hydrogen-ready reciprocating engines, a requirement added after a 2023 cold snap forced the province to shed 105 MW of firm load.

What the utilities gain

For grid planners, the appeal is twofold: speed and cost. A traditional 100-MW gas peaker takes 30–36 months to permit and build and costs about $120 million in capital expenditure. An AI factory that can deliver the same 100 MW of downward reserve within seconds can be permitted as a load modification rather than a generator, trimming the timeline to 14 months and shifting the capital cost to Emerald AI’s balance sheet. Utilities also avoid long-term capacity charges; they pay only for reserve service actually dispatched.

Credit-rating agency Moody’s views the shift favorably. In a note to clients, analyst Swami Venkataramani wrote that SRP’s 200 MW of flexible compute ‘could defer $260 million of transmission upgrades and support the utility’s 2 GW renewable expansion without additional equity investment,’ helping to keep base-rate increases below 2 % through 2026. Similar math applies to TenneT, where €1 invested in flexible load is estimated to offset €3.40 in grid-strengthening copper.

How Much Money Can an AI Factory Make From Grid Services?

Emerald AI’s pitch deck, reviewed by Wall Street analysts, projects three revenue pillars: high-performance compute leasing, grid-service payments, and environmental credits. For a 50-MW reference site outside Phoenix, the company forecasts $48 million in annual compute revenue at 60 % utilization and $0.07 per GPU-hour, roughly 15 % below hyperscale cloud rates. Grid services add another $9.4 million, derived from hourly reserve-market clearing prices, seasonal 4CP avoidance in Texas, and forward-capacity payments in PJM. Carbon credits contribute about $450,000, assuming 10,000 tCO₂ offset at California’s current $45 per metric ton.

Against that $57.85 million top line, operating expenses total $38 million: $23 million for electricity procured on day-ahead markets, $6 million for O&M, $4 million for debt service, and $5 million for rent and labor. EBITDA lands at about $19.9 million, a 34 % margin. After depreciation and taxes, unlevered IRR reaches 14 %, rising to 18 % if the site qualifies for the federal investment tax credit by co-locating with solar-plus-storage.
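The forecast's arithmetic can be reproduced in a few lines. This minimal sketch works in millions of dollars. It values the carbon line from its stated assumptions (10,000 tCO₂ at $45 per tonne) and otherwise uses the reported inputs, following the forecast's own grouping, which counts debt service among operating expenses.

```python
# All figures in millions of US dollars per year, taken from the forecast as reported.
revenue = {
    "compute": 48.0,
    "grid_services": 9.4,
    "carbon": 10_000 * 45 / 1_000_000,  # 10,000 tCO2 at $45 per tonne
}
opex = {
    "electricity": 23.0,
    "o_and_m": 6.0,
    "debt_service": 4.0,   # counted among operating expenses in the forecast
    "rent_and_labor": 5.0,
}

top_line = sum(revenue.values())
total_opex = sum(opex.values())
ebitda = top_line - total_opex
margin = ebitda / top_line

print(f"top line ${top_line:.2f}M, opex ${total_opex:.1f}M, "
      f"EBITDA ${ebitda:.2f}M, margin {margin:.0%}")
```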

The numbers are sensitive to power prices. Every $10 per MWh increase in round-the-clock electricity prices trims EBITDA by $2.2 million, so Emerald AI hedges 80 % of its load through calendar-year swaps. Conversely, every $5 per MW-hour rise in ancillary-service prices adds $1.4 million of gross margin, creating a natural hedge during tight-supply periods when both power prices and reserve rates spike.
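Working the two sensitivities backward shows the volumes they imply. This is a minimal sketch that assumes the $2.2 million figure is the unhedged exposure and that the hedge covers a fixed 80 % of volume. The forecast does not spell out either assumption.

```python
HOURS_PER_YEAR = 8760
HEDGED_SHARE = 0.80


def implied_volume(sensitivity_usd: float, price_step_usd: float) -> float:
    """Annual volume implied by a price sensitivity ($ change / $ per-unit step)."""
    return sensitivity_usd / price_step_usd


energy_mwh = implied_volume(2.2e6, 10)    # MWh of electricity exposed to price moves
reserve_mw_h = implied_volume(1.4e6, 5)   # MW-hours of ancillary service sold
avg_load_mw = energy_mwh / HOURS_PER_YEAR
avg_reserve_mw = reserve_mw_h / HOURS_PER_YEAR
# Remaining EBITDA hit per $10/MWh once 80 % of the load is hedged.
residual_hit_usd = (1 - HEDGED_SHARE) * 2.2e6

print(f"{energy_mwh:,.0f} MWh (~{avg_load_mw:.1f} MW average)")
print(f"{reserve_mw_h:,.0f} MW-h (~{avg_reserve_mw:.1f} MW average)")
print(f"post-hedge hit per $10/MWh: ${residual_hit_usd:,.0f}")
```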

Investor appetite

Canada Pension Plan Investment Board led a $400 million Series C round for Emerald AI in March, valuing the three-year-old startup at $2.7 billion. CPPIB managing director Laura Peracchio cited ‘inflation-linked contracted cash flows’ as the primary draw. Debt markets are opening as well: BNP Paribas is arranging a $650 million green-project bond for the TenneT site, structured so that coupon step-ups are triggered if carbon-reduction targets are missed, aligning incentives without parental guarantees from Nvidia.

Looking ahead, Emerald AI projects a 1 GW pipeline across 20 sites by 2029. If grid-service prices merely track inflation, that portfolio could generate $400 million of annual EBITDA, enough to justify a public listing at an enterprise value of 12–14 times EBITDA, analysts at Evercore ISI wrote last week. The bigger prize, according to chief executive Aidan McKenna, is enterprise software: DSX Flex is being productized for sale to other data-center operators, a market Nvidia estimates at $5 billion by 2027.
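Read as an EV/EBITDA multiple, the analysts' range implies the valuation below. The per-site figure is a simple average across the 20 planned sites. It assumes equal site sizes, which the projection does not state.

```python
annual_ebitda_usd = 400e6
multiples = (12, 14)   # EV/EBITDA range attributed to Evercore ISI
sites = 20

ev_range = tuple(m * annual_ebitda_usd for m in multiples)
ebitda_per_site = annual_ebitda_usd / sites  # simple average; assumes equal sites

print(f"implied enterprise value: ${ev_range[0] / 1e9:.1f}B to ${ev_range[1] / 1e9:.1f}B")
print(f"EBITDA per site: ${ebitda_per_site / 1e6:.0f}M")
```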

Will Flexible AI Factories Become the New Norm for Compute and Power?

The concept turns the traditional data-center business model on its head. Instead of selling guaranteed uptime, operators are promising guaranteed downtime, at least for a few seconds when the grid is stressed. Early adopters believe the trade-off is worthwhile: faster interconnection, lower effective energy prices, and a new revenue stream that can undercut hyperscale cloud providers on cost per GPU-hour. If 1 GW of flexible AI load comes online by 2029, as Emerald AI projects, it could supply 2 % of PJM’s total reserves, the equivalent of two and a half 400-MW gas peakers.

But scaling will depend on software maturity. DSX Flex must prove it can manage heterogeneous workloads across multiple customers without violating service-level agreements. A mis-timed checkpoint restart that loses 12 hours of training on a 175-billion-parameter model could wipe out a year of grid-service profit. Nvidia’s answer is tighter integration with SLURM and Kubernetes schedulers, plus an insurance product underwritten by Munich Re that pays out if curtailment causes job failure.
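The risk in that example scales with GPU count and with time since the last checkpoint. The sketch below is hypothetical. The GPU counts and the $2 per GPU-hour price are placeholder assumptions, not figures from Nvidia or Emerald AI, and pausing only jobs with a fresh checkpoint is just one simple policy a scheduler integration might apply.

```python
from dataclasses import dataclass


@dataclass
class TrainingJob:
    name: str
    gpus: int
    hours_since_checkpoint: float
    usd_per_gpu_hour: float   # hypothetical price, for illustration only


def work_at_risk_usd(job: TrainingJob) -> float:
    """Value of compute lost if the job fails and restarts from its last checkpoint."""
    return job.gpus * job.hours_since_checkpoint * job.usd_per_gpu_hour


def pausable(jobs: list[TrainingJob], max_checkpoint_age_h: float) -> list[str]:
    """Jobs safe to pause: those with a checkpoint no older than max_checkpoint_age_h."""
    return [j.name for j in jobs if j.hours_since_checkpoint <= max_checkpoint_age_h]


jobs = [
    TrainingJob("fine-tune", gpus=512, hours_since_checkpoint=0.2, usd_per_gpu_hour=2.0),
    TrainingJob("pretrain-175b", gpus=8192, hours_since_checkpoint=12.0, usd_per_gpu_hour=2.0),
]
print(pausable(jobs, max_checkpoint_age_h=0.5))   # ['fine-tune']
print(f"${work_at_risk_usd(jobs[1]):,.0f}")        # $196,608
```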

Regulatory risk also looms. FERC Order 2222 is under legal challenge from vertically integrated utilities that argue third-party aggregators should not receive wholesale payments. A ruling that narrows the definition of ‘distributed energy resource’ could exclude data-center load, forcing Emerald AI to renegotiate tariffs state-by-state. Conversely, an expansion of the order to include demand-side flexibility could open markets in the Southeastern U.S., where curtailment currently earns zero dollars.

Competitive response will be fierce. Amazon Web Services has already filed patents for ‘grid-attentive compute’ that pauses spot instances during scarcity pricing. Google’s DeepMind showed in 2016 that machine-learning control could cut the energy used to cool Google’s data centers by up to 40 %, though that work was not tied to real-time power markets. Microsoft’s 2024 deal with Constellation to restart the Three Mile Island reactor shows hyperscalers are exploring supply-side solutions as well. Nvidia’s advantage is bundling hardware, firmware, and market access into a turnkey package utilities can understand.

Bottom line

For now, the Vera Rubin DSX playbook offers a rare win-win-win: utilities gain fast reserves, developers gain faster interconnection, and AI customers gain cheaper compute. Whether the model remains niche or becomes the default will hinge on whether the first 25-MW Phoenix pilot can hit its 14-month schedule and prove that artificial intelligence can solve the very power-grid problem it is creating.

Frequently Asked Questions

Q: What is Nvidia’s Vera Rubin DSX architecture?

Vera Rubin DSX is Nvidia’s reference design for AI factories that pairs Arm-based Grace server nodes with liquid-cooled Blackwell GPUs and DSX Flex orchestration software to scale from 1 MW to 100 MW while modulating power draw within a few hundred milliseconds.

Q: How do AI factories help electricity grids?

By acting as flexible energy assets: they ramp compute load up or down within seconds, absorb surplus renewables, provide fast frequency response, and can island themselves on on-site batteries or reciprocating gas engines, reducing the need for new peaker plants.

Q: Which power companies are involved?

Neither company has named them. The partners say the six signatories are three U.S. investor-owned utilities, two European TSOs, and one Canadian provincial generator, with memorandums covering a combined 42 GW of peak demand.