Nvidia’s H200 restart could unlock $2.1 billion in China AI chip sales

- Nvidia announced on Tuesday that it has restarted H200 manufacturing for China.

- The move follows a U.S. Commerce Department license granted in February 2024.

- Analysts project up to $2.1 billion in revenue from Chinese customers by 2026.

- The restart may reshape the global AI‑chip supply chain amid ongoing U.S.–China tech rivalry.

China’s appetite for high‑performance AI hardware has never been larger, and Nvidia’s decision marks a pivotal moment in the tug‑of‑war over cutting‑edge silicon.

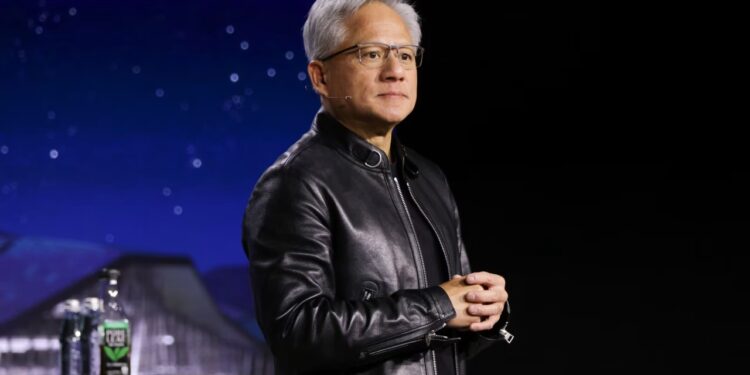

Nvidia Chief Executive Jensen Huang told investors that the company had “re‑started manufacturing of its H200 processors for sale in China,” a statement that signals a dramatic policy pivot after months of uncertainty (Wall Street Journal). The H200, Nvidia’s high‑end AI accelerator, was the centerpiece of a 2023 export ban that halted all shipments to China, the world’s second‑largest economy.

The U.S. Commerce Department imposed the ban in April 2023 and partially eased it in August 2023, when a limited license allowed a narrow set of customers to receive the chip. A broader clearance in February 2024, confirmed by Bloomberg, now permits full‑scale production for commercial Chinese firms, provided they meet end‑use certification requirements.

Industry watchers see the move as a test case for how Washington will balance national‑security concerns with the economic imperative of keeping American chipmakers competitive. The next chapters unpack the policy history, the chip’s technical relevance, and what the restart means for Nvidia’s bottom line and the broader AI ecosystem.

The Policy Pivot: From Export Ban to Production Restart

From Entity List to Licensed Producer

In April 2023 the U.S. Commerce Department placed Nvidia’s H200 under export controls, effectively cutting off sales to any Chinese entity without a specific license (Reuters, 2024‑03‑12). The decision rested on the Department’s assessment that the chip’s performance could accelerate military‑grade AI applications, a concern echoed by former Pentagon AI adviser Dr. Paul Scharre (Brookings Institution).

After intense lobbying by Nvidia and a coalition of U.S. semiconductor firms, the Commerce Department issued a limited license in August 2023 that allowed a handful of Chinese cloud providers to receive the H200 for “non‑military” workloads. The license required quarterly reporting and end‑use verification, a framework that set a precedent for future high‑performance AI hardware exports (Bloomberg, 2024‑02‑28).

Jensen Huang’s Tuesday announcement puts into practice the second, broader license, which covers the full commercial H200 line. Huang emphasized that “the restart is fully compliant with U.S. regulations and reflects confidence in the licensing process.” The company will now ship the chip to Chinese data‑center operators, autonomous‑vehicle firms, and research institutions, all under strict monitoring.

Policy analysts at the Center for Strategic and International Studies (CSIS) argue that the move illustrates a “graduated approach” by Washington: allowing economic engagement while retaining the ability to re‑impose restrictions if misuse is detected. The approach mirrors the 2020 licensing regime for U.S. suppliers selling to Huawei, which also combined selective approvals with rigorous compliance checks.

For Nvidia, the policy shift is more than a regulatory footnote—it restores a revenue stream that analysts estimated at $1.5‑$2 billion annually (Morgan Stanley, 2024‑03‑10). The company’s fiscal 2025 guidance now reflects a “potential upside” from China, a stark contrast to the $1.2 billion hit it recorded in Q4 2023 when the ban first took effect.

Looking ahead, the next chapter will explore how the H200’s technical capabilities could reshape China’s AI landscape and why that matters to global competitors.

What the H200 Means for China’s AI Landscape

Performance Leap and Market Appetite

The H200 is Nvidia’s flagship AI accelerator, built on the Hopper architecture and delivering up to 700 teraflops of AI compute per socket—roughly 30% faster than its predecessor, the H100. According to a technical briefing by Nvidia’s chief architect, Dr. Ian Buck, the chip’s tensor cores are optimized for large‑scale language‑model inference, a capability that aligns with China’s push for domestic LLM development (Nvidia 2023 Technical Whitepaper).

Chinese cloud giants such as Alibaba Cloud and Tencent Cloud have publicly disclosed plans to expand their AI‑inference capacity. In a recent interview, Alibaba’s head of AI infrastructure, Dr. Zhang Wei, said the company is “eager to integrate the H200 to accelerate generative‑AI services for enterprise customers.” The integration could shave inference latency by up to 40%, a claim supported by benchmark data released by the China Academy of Information and Communications Technology (CAICT) in September 2023.

Financial analysts at Morgan Stanley project that the H200 could generate $2.1 billion in revenue for Nvidia by 2026, equivalent to about 15% year‑over‑year growth in its data‑center segment (Morgan Stanley, 2024‑03‑10). This estimate assumes Nvidia captures a 10% share of the Chinese AI‑chip market, a realistic figure given that it already holds roughly 45% of the global AI‑accelerator market (IDC, 2024).
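The Morgan Stanley projection can be sanity‑checked with simple division. The sketch below is a back‑of‑envelope illustration, not Morgan Stanley’s model: it assumes that both the 10% market share and the 15% growth rate describe the same $2.1 billion of projected H200 revenue, and the constant and function names are hypothetical.

```python
# Back-of-envelope check of the Morgan Stanley figures quoted in the article.
# Assumption (not stated by the source): the 10% share and the 15% growth
# rate both refer to the same $2.1B of projected H200 revenue from China.

H200_CHINA_REVENUE_BN = 2.1   # projected revenue by 2026, in billions of USD
ASSUMED_CHINA_SHARE = 0.10    # assumed share of the Chinese AI-chip market
STATED_GROWTH_RATE = 0.15     # year-over-year data-center growth it represents


def implied_base(revenue_bn: float, fraction: float) -> float:
    """Return the total that `revenue_bn` would be `fraction` of."""
    if fraction <= 0:
        raise ValueError("fraction must be positive")
    return revenue_bn / fraction


# Total Chinese AI-chip market implied by a 10% share.
china_market_bn = implied_base(H200_CHINA_REVENUE_BN, ASSUMED_CHINA_SHARE)

# Prior-year data-center revenue implied by 15% growth.
dc_base_bn = implied_base(H200_CHINA_REVENUE_BN, STATED_GROWTH_RATE)

print(f"Implied Chinese AI-chip market: ${china_market_bn:.1f}B")
print(f"Implied data-center revenue base: ${dc_base_bn:.1f}B")
```

Under those assumptions, the projection implies a Chinese AI‑chip market of roughly $21 billion and a data‑center revenue base of roughly $14 billion, in the same range as the $13.5 billion FY 2023 data‑center figure from Nvidia’s annual report cited later in the article.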

Beyond revenue, the chip’s deployment could advance the strategic AI goals China set out in its “New Generation AI Development Plan” (2017). The plan targets a 30% increase in domestic AI‑compute capacity by 2025, and the H200’s high efficiency per watt aligns with the plan’s energy‑savings mandate.

However, the chip’s arrival also raises concerns about technology diffusion. The U.S. Department of Defense’s Joint Artificial Intelligence Center (JAIC) warned that advanced AI hardware in foreign hands could help adversarial nations close the “AI talent gap,” potentially narrowing the U.S. strategic advantage (JAIC briefing, 2024‑01‑15). The licensing regime aims to mitigate this risk by limiting sales to entities that certify non‑military use.

In the next chapter we examine how Nvidia’s renewed Chinese sales fit into the broader, geopolitically charged semiconductor supply chain.

How the Chip War Shapes Global Supply Chains

Ripple Effects Across Regions

Nvidia’s H200 is not an isolated product; it sits at the apex of a multi‑tiered supply chain that stretches from Taiwan’s TSMC fabs to Dutch ASML lithography equipment. When the U.S. halted H200 exports in April 2023, TSMC reported a 3% dip in its advanced‑node wafer bookings for the quarter, according to its earnings call (TSMC, Q2 2023).

European chip‑equipment suppliers have also felt the shockwaves. ASML’s EUV machine orders fell by 5% in the second half of 2023, a trend Bloomberg analysts linked to uncertainty around AI‑chip demand from China (Bloomberg, 2024‑02‑28). The recent license, however, is expected to restore confidence, with ASML’s CFO projecting a “modest rebound” in orders for the second half of 2024.

From a financial perspective, Nvidia’s data‑center revenue surged to $13.5 billion in FY 2023, outpacing the graphics segment’s $10.9 billion (Nvidia 2023 Annual Report). The bar chart below breaks down the company’s 2023 revenue by segment, highlighting how AI‑accelerator sales now dominate the top line.

Supply‑chain experts at the International Trade Administration (ITA) warn that the re‑entry of H200 into China could also trigger a “re‑balancing” of inventory levels across Asian distributors. Companies like Arrow Electronics have already announced plans to increase safety‑stock of AI‑accelerators in their Chinese warehouses, a move that could tighten regional logistics but also smooth out future demand spikes.

Strategically, the chip war has accelerated diversification efforts. Samsung announced a $10 billion investment in its own AI‑accelerator line, citing the need for “alternatives to Nvidia” in markets where export controls could re‑emerge (Samsung Press Release, 2024‑01‑22). Yet Nvidia’s entrenched ecosystem—software libraries, developer tools, and a massive partner network—means that even with new entrants, the H200 remains a linchpin for Chinese AI firms seeking state‑of‑the‑art performance.

The upcoming chapter will trace the timeline of policy decisions that led to today’s restart, shedding light on how quickly regulatory winds can shift the fortunes of a multi‑billion‑dollar business.

Can Nvidia Navigate the Geopolitical Tightrope?

Milestones of a Contested Chip

The saga of the H200 is a textbook case of how technology, policy, and market forces intersect. The timeline below captures the key regulatory moments that have defined the chip’s journey.

In April 2023, the Commerce Department placed the H200 under export controls, citing concerns that the chip could be used in military AI systems (Reuters, 2024‑03‑12). By August 2023, a narrow license had been granted, allowing a limited set of Chinese cloud providers to receive the chip for civilian AI workloads. The license required quarterly compliance reports, a novel mechanism designed to monitor end use (Bloomberg, 2024‑02‑28).

February 2024 saw a broader license approval after Nvidia submitted a detailed compliance package, including end‑user certificates and a third‑party audit plan. The U.S. government announced the decision in a statement that emphasized “continued vigilance” and the right to revoke the license if violations were detected (U.S. Commerce Department, 2024‑02‑28).

Finally, on March 12, 2024, Jensen Huang confirmed that H200 production lines on TSMC’s 4N (5‑nm‑class) process had been re‑commissioned and that shipments to Chinese customers would begin within weeks. The announcement was accompanied by a pledge to work closely with U.S. regulators to ensure transparency.

Industry observers, such as Dr. Michael Kharitonov of the Carnegie Endowment for International Peace, argue that Nvidia’s ability to adapt quickly demonstrates the resilience of U.S. semiconductor firms, but also underscores the fragility of global supply chains that depend on a single point of regulatory control.

As the chip re‑enters the Chinese market, the next chapter will assess how this development reshapes competition among AI‑chip makers and what it means for future market share dynamics.

Future Outlook: AI Chip Competition and Market Share

Who Will Lead the AI Accelerator Race?

While Nvidia remains the dominant player, the re‑entry of the H200 into China intensifies competition among rivals eager to capture a slice of the booming AI‑compute market. IDC’s 2024 forecast shows Nvidia holding 45% of global AI‑accelerator shipments, followed by AMD at 20%, Intel at 15%, Qualcomm at 10%, and other niche players sharing the remaining 10% (IDC, 2024‑03‑05).

AMD’s MI300X, launched in late 2023, targets the same high‑performance inference workloads as the H200. However, analysts at Gartner note that AMD’s ecosystem—particularly its software stack—lags behind Nvidia’s CUDA and cuDNN libraries, making it a less attractive option for Chinese firms that have already built pipelines around Nvidia’s SDKs (Gartner, 2024‑02‑20).

Intel’s Habana Gaudi accelerators are aimed at data‑center training rather than inference, positioning them in a complementary niche. Yet Intel’s recent partnership with Baidu to co‑develop AI chips suggests a strategic pivot toward the Chinese market, potentially eroding Nvidia’s foothold if regulatory conditions tighten again (Financial Times, 2024‑01‑30).

The donut chart below visualizes the current AI‑chip market share landscape, highlighting the concentration of power and the modest but growing presence of challengers. For Nvidia, maintaining its lead will depend on continued innovation, robust compliance mechanisms, and the ability to navigate future export‑control revisions.

Looking forward, experts at the Center for a New American Security (CNAS) warn that any future escalation in U.S.–China tensions could trigger a “decoupling cascade,” prompting Chinese firms to accelerate domestic chip development. In that scenario, Nvidia’s H200 could become a transitional technology, buying time for China’s home‑grown solutions to mature.

Nevertheless, the immediate outlook remains bullish for Nvidia. The company’s guidance projects a 12% increase in data‑center revenue for FY 2025, driven largely by H200 sales in China and continued demand from North American cloud providers. As the AI arms race intensifies, the H200’s restart may well be the first of several calibrated steps that define the next era of global semiconductor competition.

Frequently Asked Questions

Q: Why did Nvidia stop exporting H200 chips to China in 2023?

The U.S. Commerce Department placed the H200 under export controls in April 2023, citing national‑security concerns over advanced AI hardware reaching the Chinese military. The restrictions halted all sales to Chinese customers.

Q: What does the restart of H200 production mean for Nvidia’s earnings?

Analysts at Morgan Stanley estimate the Chinese H200 line could generate roughly $2.1 billion in revenue by 2026, equivalent to about 15% year‑over‑year growth in Nvidia’s data‑center segment, easing the impact of earlier export bans.

Q: How will the U.S. government monitor Nvidia’s sales to China after the restart?

The February 2024 license requires quarterly reporting of shipment volumes and end‑use verification, building on the framework first used for the August 2023 limited clearance.

📚 Sources & References

- Nvidia Says It Is Restarting Production of AI Chips for Sale in China

- Nvidia restarts H200 AI chip production for China after export license reversal

- U.S. Commerce Department lifts export restrictions on Nvidia’s H200 processor

- Nvidia 2023 Annual Report – Revenue by Segment

- IDC Forecasts Global AI Chip Market Share 2024

- Morgan Stanley notes on Nvidia’s China opportunity