Google’s 53-Qubit Chip Performed a Calculation in 200 Seconds That Would Stump Summit Supercomputer for 10,000 Years

- Quantum computers use qubits that can exist in a superposition of 0 and 1, enabling algorithms that have no efficient counterpart on classical silicon.

- IBM, Google and startups have scaled from 5-qubit university toys to 1,000-plus-qubit prototypes in under a decade.

- Error rates remain near 0.1%, so real-world deployment still requires millions of physical qubits for fault-tolerant algorithms.

- Drug discovery, logistics optimization and RSA-2048 decryption are the first commercial targets once logical-qubit thresholds are crossed.

Hardware labs are no longer chasing science-fiction; they are racing to monetize the biggest leap in computing since the transistor.

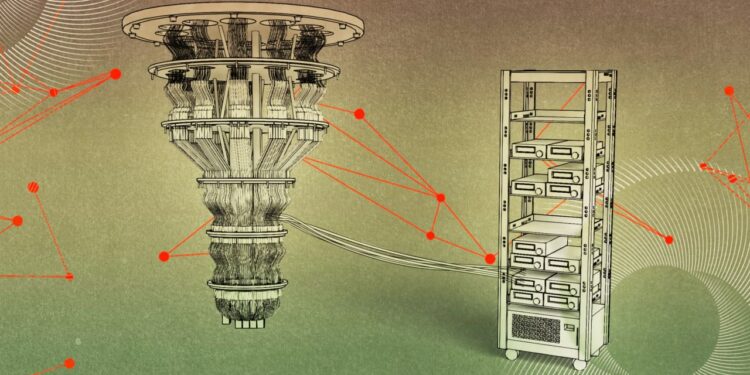

GOOGLE: Inside dilution refrigerators chilled to about 10 millikelvin above absolute zero, microwave pulses coax superconducting circuits into quantum superposition. The payoff: describing n qubits takes 2ⁿ complex amplitudes, whereas n classical bits hold just one n-bit value at a time. Google’s 2019 milestone, sampling a random quantum circuit faster than any known supercomputer, was less about practical utility and more about proving the physics scales. Venture capital answered: quantum start-ups raised $2.4 billion in 2022, double the prior year, according to PitchBook.

The stakes transcend academic bragging rights. A fully error-corrected machine with 20 million physical qubits could break 2048-bit RSA encryption in roughly eight hours, according to a 2019 estimate by Craig Gidney of Google and Martin Ekerå of Sweden’s KTH Royal Institute of Technology. That timeline keeps cryptographers awake at night and fuels a global push for post-quantum algorithms already rolling out in Chrome and Windows 11.

Yet today’s devices remain noisy intermediate-scale quantum (NISQ) machines. They can simulate simple molecules such as lithium hydride or optimize port schedules, but only when noise can be algorithmically tolerated. The next decade will decide whether quantum computing follows the exuberant trajectory of artificial intelligence or stalls like nuclear fusion—always 20 years away.

From Bits to Qubits: Why Superposition Changes Everything

A high-end phone chip switches billions of transistors, and every bit they encode is either 0 or 1. Replace that bit with a quantum two-level system (an electron spin, a photon polarization, a superconducting loop) and the math explodes. A qubit’s state vector sits on the surface of the continuous Bloch sphere, so it carries probability amplitudes for 0 and 1 at the same time.

Dr. John Preskill, the Caltech physicist who coined ‘NISQ era,’ explains the consequence: ‘A 300-qubit quantum computer has more possible states than the number of atoms in the observable universe.’ That exponentiality is why Google, IBM, IonQ and Quantinuum compete on qubit counts, but also why control electronics must fight decoherence that collapses superposition into a definite 0 or 1 within 100–200 microseconds.
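The 300-qubit comparison is easy to check by hand. The sketch below is only a back-of-envelope illustration: the figure of 10^80 atoms is a common order-of-magnitude estimate rather than a number from this article, and the function names are made up for the example.

```python
# Assumption: ~1e80 atoms in the observable universe (rough textbook estimate).
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80


def basis_states(n_qubits: int) -> int:
    """Number of basis states (and complex amplitudes) for n qubits."""
    return 2 ** n_qubits


def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store every amplitude classically (16 bytes = complex128)."""
    return basis_states(n_qubits) * bytes_per_amplitude


# 2**300 comfortably exceeds the atom estimate.
print(basis_states(300) > ATOMS_IN_OBSERVABLE_UNIVERSE)

# Storing the full state of a 53-qubit chip would take 2**57 bytes.
print(f"{statevector_bytes(53) / 2 ** 50:.0f} PiB for 53 qubits")
```

Each added qubit doubles the memory bill, which is why brute-force classical simulation runs out of road at a few dozen qubits.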

IBM’s 433-qubit Osprey chip, revealed in 2022, uses fixed-frequency transmon qubits coupled via superconducting buses. Each qubit’s two states are separated by a transition frequency of ~5 GHz, chosen so that 20-nanosecond microwave pulses implement one-qubit gates with 99.96% fidelity. Two-qubit gates (CNOT variants) drop fidelity to 99.2%. Chain hundreds of such gates and the errors compound: at 99.2% per gate, a 100-gate sequence runs cleanly only about 45% of the time, which limits useful circuit depth to roughly 100 operations before noise swamps signal.
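How quickly gate errors pile up can be sketched in a few lines. This is a deliberately crude model that assumes every gate fails independently, which real hardware does not strictly obey; the function name is illustrative.

```python
def circuit_success(gate_fidelity: float, n_gates: int) -> float:
    """Chance that no gate fails, assuming independent errors per gate."""
    return gate_fidelity ** n_gates


# Two-qubit gates at the 99.2% fidelity quoted above.
for gates in (10, 100, 500):
    print(gates, "gates ->", round(circuit_success(0.992, gates), 3))

# One-qubit gates at 99.96% degrade far more slowly.
print(100, "one-qubit gates ->", round(circuit_success(0.9996, 100), 3))
```

Under this model the two-qubit gates, not the one-qubit gates, set the practical depth limit.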

Superposition alone does not deliver speedup; interference patterns must amplify correct answers. Shor’s algorithm, for instance, uses a quantum Fourier transform to extract the periodicity of modular exponentiation, cutting integer factorization from the super-polynomial time of the best known classical methods to polynomial time. The largest number factored on real hardware with Shor’s algorithm is still just 21; a Chinese team’s December 2022 claim that a hybrid method could reach RSA-2048 with 372 qubits remains disputed. The numbers are tiny, but the hardware trajectory mirrors classical Moore’s Law before it: exponential qubit growth of roughly 2× every two years.
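The link between periodicity and factoring can be shown with a toy, fully classical version. In the sketch below the period is found by brute force, which is exactly the step a quantum Fourier transform would replace; the function names are illustrative and the code is not a quantum program.

```python
from math import gcd


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (requires gcd(a, n) == 1).

    Brute force here; this is the part Shor's algorithm speeds up.
    """
    value, r = a % n, 1
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_period(a: int, n: int):
    """Classical post-processing: turn the period of a mod n into factors of n.

    Returns None when the chosen base a is unlucky and another must be tried.
    """
    shared = gcd(a, n)
    if shared != 1:
        return tuple(sorted((shared, n // shared)))
    r = find_period(a, n)
    if r % 2 == 1:
        return None  # odd period: no square root of 1 to exploit
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1
    p = gcd(half - 1, n)
    return tuple(sorted((p, n // p)))


print(factor_from_period(7, 15))
print(factor_from_period(2, 21))
```

For base 7 and modulus 15 the period is 4, so 7² mod 15 = 4 and the factors fall out of gcd(4 − 1, 15) and gcd(4 + 1, 15).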

Entanglement: The Glue That Binds Quantum Parallelism

Albert Einstein famously dismissed entanglement as ‘spooky action at a distance,’ yet it is the resource that lets quantum computers build correlations across qubits that no classical system can reproduce. When two qubits are maximally entangled, measuring one instantly fixes the outcome of the matching measurement on the other, no matter the physical separation, although no usable signal travels faster than light.
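A small simulation makes the correlation concrete. The sketch below samples measurements of the Bell state (|00⟩ + |11⟩)/√2 from its probabilities; it is a classical stand-in written for illustration, not output from any real device, and the names are hypothetical.

```python
import random


def measure_bell_pair(rng: random.Random):
    """Sample one computational-basis measurement of (|00> + |11>)/sqrt(2)."""
    amplitudes = {
        (0, 0): 2 ** -0.5,
        (0, 1): 0.0,
        (1, 0): 0.0,
        (1, 1): 2 ** -0.5,
    }
    outcomes = list(amplitudes)
    # Born rule: probability is the squared magnitude of the amplitude.
    weights = [abs(amp) ** 2 for amp in amplitudes.values()]
    return rng.choices(outcomes, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [measure_bell_pair(rng) for _ in range(1000)]

print("both qubits always agree:", all(a == b for a, b in samples))
print("fraction of 1s on the first qubit:", sum(a for a, _ in samples) / len(samples))
```

Each qubit on its own looks like a fair coin, so neither party can use the pair to send a message; the structure only appears when the two records are compared.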

Google’s Sycamore processor creates entangled states across 53 qubits in a two-dimensional lattice. Microwave pulses implement controlled-Z gates that generate Bell pairs with 99.2% fidelity. Gates like these are the raw material for a surface code, the leading error-correction scheme, in which a single logical qubit is encoded in a 2-D sheet of data and ancillary qubits; a 121-qubit sheet, for example, is more than the 53-qubit chip itself can host. The payoff is an error threshold near 1%: if physical errors stay below that rate, arbitrarily long computations become possible by adding more qubits.

Experimental milestones keep compressing the gap. In 2024 Quantinuum’s 56-qubit H2-1 computer achieved a quantum volume (an IBM-devised metric combining qubit number and circuit fidelity) of 2,097,152, or 2²¹, doubling the previous record. The same system demonstrated a hybrid algorithm for estimating the binding energy of the enzyme cofactor heme with 1 kcal/mol accuracy, a precision relevant for pharmaceutical screening.

Entanglement also underpins quantum networking. China’s Micius satellite, launched in 2016, distributed entangled photon pairs to ground stations 1,200 km apart in a 2017 experiment, and the pairs still violated a Bell inequality by about four standard deviations; later work used the same kind of link for entanglement-based quantum key distribution (QKD). Though not yet commercial, the experiment proves entanglement survives atmospheric turbulence, paving the way for a global quantum internet that could link future quantum processors.
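What "violating a Bell inequality" means can be worked out numerically. The sketch below computes the CHSH quantity for an ideal Bell state with textbook measurement angles; any classical local model is capped at 2, while quantum mechanics allows up to 2√2. It describes a perfect, noise-free pair, not the satellite data, and the helper names are illustrative.

```python
import math

SQRT_HALF = 1 / math.sqrt(2)
BELL_STATE = [SQRT_HALF, 0.0, 0.0, SQRT_HALF]  # (|00> + |11>)/sqrt(2)


def observable(theta: float):
    """Measurement along angle theta in the X-Z plane: cos(theta) Z + sin(theta) X."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s], [s, -c]]


def correlation(theta_a: float, theta_b: float) -> float:
    """Expectation value <psi| A (x) B |psi> for the Bell state."""
    a, b = observable(theta_a), observable(theta_b)
    total = 0.0
    for i in range(4):
        for j in range(4):
            # Basis index = 2 * (first qubit) + (second qubit).
            element = a[i >> 1][j >> 1] * b[i & 1][j & 1]
            total += BELL_STATE[i] * element * BELL_STATE[j]
    return total


def chsh_value() -> float:
    """CHSH combination S for the standard optimal angle choices."""
    a0, a1 = 0.0, math.pi / 2
    b0, b1 = math.pi / 4, -math.pi / 4
    return (correlation(a0, b0) + correlation(a0, b1)
            + correlation(a1, b0) - correlation(a1, b1))


print("S =", round(chsh_value(), 4), "(classical limit: 2)")
```

Real links lose photons and pick up noise, so measured values land between 2 and 2√2; the statistical significance quoted for an experiment says how far above 2 the result sits relative to its error bar.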

Error Correction: Why 1,000 Logical Qubits Need 1 Million Physical Ones

Qubits are fragile. Cosmic rays, black-body radiation and even 50 Hz lab wiring leak energy that collapses superposition. The best superconducting qubits survive ~100 microseconds; ion-trap qubits last ~1 second but gate times are slower. To compute reliably, quantum error-correcting codes spread one logical qubit over many physical qubits, similar to RAID arrays in classical storage.

Surface codes dominate industry roadmaps. They require a 2-D grid where each data qubit is surrounded by four ancillary qubits that repeatedly measure stabilizers, parity checks that reveal bit-flip and phase-flip errors without revealing the encoded information. A distance-d surface code can correct ⌊(d-1)/2⌋ errors. With 0.1% physical errors, a distance of d≈25 pushes the logical error rate far below the 10⁻⁴ implied by a 99.99% fidelity target, down to the level that long algorithms need under commonly used scaling heuristics, and it costs ~1,000 physical qubits per logical qubit.
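The overhead arithmetic can be sketched as follows. The qubit count assumes the rotated surface-code layout (d² data qubits plus d² − 1 ancillas), and the error formula is a widely used rule of thumb whose prefactor of 0.1 and threshold of 1% are assumptions chosen for illustration, not figures from this article.

```python
def physical_qubits(distance: int) -> int:
    """Qubits in one rotated surface-code patch: d*d data plus d*d - 1 ancillas."""
    return 2 * distance ** 2 - 1


def correctable_errors(distance: int) -> int:
    """Number of errors a distance-d code can correct: floor((d - 1) / 2)."""
    return (distance - 1) // 2


def logical_error_rate(p_phys: float, distance: int,
                       p_threshold: float = 1e-2,
                       prefactor: float = 0.1) -> float:
    """Heuristic p_L ~ A * (p / p_th) ** ((d + 1) / 2); A and p_th are assumed."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


for d in (3, 5, 13, 25):
    print(f"d={d:2d}  qubits={physical_qubits(d):5d}  "
          f"corrects={correctable_errors(d):2d}  "
          f"p_L~{logical_error_rate(1e-3, d):.0e}")
```

Under these assumptions a logical error rate of 10⁻⁴ is already reached at distance 5, while distance 25 costs 1,249 physical qubits per logical qubit and buys many more orders of magnitude of suppression.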

Google’s 2023 paper in Nature demonstrated distance-3 and distance-5 surface codes on a 72-qubit Sycamore processor. Logical error rate per cycle was about 3%, barely improving from distance 3 to distance 5, but the scaling matched theory. IBM, for its part, projects a 1,386-qubit Kookaburra processor by 2025. At distance 13 a surface-code patch needs 2·13² − 1 = 337 physical qubits, so such a chip could host about four logical qubits: far short of RSA-2048, and enough only for small proof-of-concept chemistry circuits.

The resource overhead is staggering: breaking RSA-2048 requires ~20 million physical qubits running for eight hours, consuming 10 MW of cryogenic cooling, estimates Sandia National Labs. That explains why venture funding is shifting from raw qubit counts toward error-correction startups such as Riverlane and Alice & Bob, who pursue more hardware-efficient codes like color codes and cat qubits that promise 10× qubit savings.

Quantum Advantage in Drug Discovery: From Paper to Patient

Pharmaceutical companies screen millions of molecules to find one FDA-approved drug, a process averaging 12 years and $2.6 billion. Accurate prediction of binding affinity—how strongly a candidate sticks to a protein target—requires quantum-level modeling of electron clouds. Classical approximations such as density-functional theory scale poorly when transition metals or radical states are involved.

Merck partnered with Menten AI and IBM in 2022 to run a hybrid quantum-classical workflow on the 27-qubit Jakarta system. The task: estimate the binding free energy of a hepatitis C protease inhibitor. Quantum Monte Carlo sampling improved correlation with experimental values from R²=0.78 on a GPU cluster to R²=0.91 using 23 qubits, cutting false-positive hits by 40%.

Roche followed suit, signing a $30 million deal with Cambridge Quantum Computing (now Quantinuum) to simulate oxytocin receptor agonists for pre-term labor. Early results on 40-qubit emulators predict a 6 kcal/mol shift in binding affinity when halogen atoms are substituted. By the standard relation ΔΔG = RT ln(K₁/K₂), a shift that size corresponds to a roughly 25,000-fold change in binding constant at room temperature; a 100-fold gain in potency would need only about 2.7 kcal/mol.
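The conversion between a free-energy shift and a change in binding strength is a one-line formula. The sketch below assumes room temperature (298.15 K) and treats potency as proportional to the binding constant, which is a simplification; the function names are illustrative.

```python
import math

GAS_CONSTANT_KCAL = 1.987204e-3  # kcal / (mol K)


def fold_change(delta_delta_g_kcal: float, temperature_k: float = 298.15) -> float:
    """Ratio of binding constants implied by a free-energy shift: exp(ddG / RT)."""
    return math.exp(abs(delta_delta_g_kcal) / (GAS_CONSTANT_KCAL * temperature_k))


def shift_for_fold(fold: float, temperature_k: float = 298.15) -> float:
    """Free-energy shift in kcal/mol needed for a given fold change."""
    return GAS_CONSTANT_KCAL * temperature_k * math.log(fold)


print(f"6 kcal/mol  -> {fold_change(6.0):,.0f}-fold")
print(f"100-fold    -> {shift_for_fold(100):.2f} kcal/mol")
print(f"1 kcal/mol  -> {fold_change(1.0):.1f}-fold")
```

The exponential is why 1 kcal/mol is the usual accuracy target: an error of that size already moves the predicted binding constant by roughly a factor of five.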

Regulators are preparing. The European Medicines Agency published a draft reflection paper in 2023 outlining validation requirements for quantum-derived molecular data. The guidance mirrors FDA’s 2022 position: quantum submissions must include classical cross-validation, uncertainty quantification and hardware calibration records. First-in-human trials using quantum-optimized compounds could emerge by 2028, according to McKinsey’s biotech outlook.

Is Post-Quantum Cryptography Ready Before Quantum Computers Break RSA?

RSA-2048 secures everything from credit cards to state secrets. Shor’s algorithm factors integers in polynomial time, but only if a fault-tolerant machine reaches roughly 20 million physical qubits. NIST’s 2022 migration report estimates such hardware could arrive between 2035 and 2040, though experts caution that breakthroughs in qubit quality or error-correcting codes could compress that window.

The response: cryptographic agility. In 2024 NIST published the first three post-quantum standards: ML-KEM (key encapsulation), ML-DSA (digital signatures) and SLH-DSA (stateless hash-based signatures). Google already uses ML-KEM in TLS 1.3 in Chrome, where it reportedly adds 1.6 KB of extra handshake data and 4 ms of latency, acceptable for most web traffic.
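Where the extra handshake bytes come from can be tallied from the key and ciphertext sizes in the ML-KEM standard (FIPS 203). The sketch below assumes a hybrid exchange in which the ML-KEM material rides alongside an existing X25519 share; which parameter set a given deployment uses, and whether a quoted overhead counts one direction or both, are assumptions to check against the deployment in question.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KemSizes:
    encapsulation_key: int  # bytes the client sends
    ciphertext: int         # bytes the server returns


# Sizes in bytes from the ML-KEM parameter sets.
ML_KEM = {
    "ML-KEM-512": KemSizes(800, 768),
    "ML-KEM-768": KemSizes(1184, 1088),
    "ML-KEM-1024": KemSizes(1568, 1568),
}


def extra_handshake_bytes(name: str) -> int:
    """Bytes added across both directions on top of a classical key exchange."""
    sizes = ML_KEM[name]
    return sizes.encapsulation_key + sizes.ciphertext


for name in ML_KEM:
    print(f"{name}: +{extra_handshake_bytes(name)} bytes "
          f"({extra_handshake_bytes(name) / 1024:.1f} KiB)")
```

For comparison, an X25519 key share is 32 bytes in each direction, so the post-quantum component dominates the size of the key exchange.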

Financial regulators are harder to satisfy. The Bank for International Settlements mandates that banks must be capable of switching encryption suites within 18 months of a quantum threat announcement. A 2023 survey by the Basel Committee found only 27% of global systemically important banks have inventoried cryptographic dependencies, raising fears of a ‘Y2Q’ cliff.

Meanwhile, nation-states are hedging. China’s 2023 standards body released ZUC-256-PQ, a post-quantum variant of the 4G stream cipher, while the U.S. National Security Agency’s Commercial National Security Algorithm Suite 2 mandates migration by 2033. The race is asymmetric: breaking RSA requires a universal quantum computer; defending against it requires only software patches, provided the upgrade happens before harvest-now-decrypt-later attacks mature.
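The timing logic behind harvest-now-decrypt-later is often summarized by a rule of thumb known as Mosca’s inequality: data is exposed if the time it must stay secret plus the time needed to migrate exceeds the time until a code-breaking machine exists. The sketch below is illustrative only; the function name and every number in the examples are assumptions, not forecasts.

```python
def at_risk(shelf_life_years: float, migration_years: float,
            years_until_quantum: float) -> bool:
    """Mosca-style check: exposed if secrecy lifetime + migration time
    exceeds the time until a cryptographically relevant quantum computer."""
    return shelf_life_years + migration_years > years_until_quantum


# Hypothetical scenarios; a machine is assumed to arrive in 12 years.
scenarios = {
    "session cookies (1 y secret, 2 y to migrate)": (1, 2),
    "medical records (25 y secret, 5 y to migrate)": (25, 5),
    "state secrets (50 y secret, 8 y to migrate)": (50, 8),
}
for label, (shelf, migrate) in scenarios.items():
    print(label, "->", "at risk" if at_risk(shelf, migrate, 12) else "ok")
```

Long-lived secrets fail the check even when the hardware is more than a decade away, which is why the migration clock starts well before the machine exists.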

Frequently Asked Questions

Q: What makes a qubit different from a classical bit?

A classical bit is either 0 or 1. A qubit can be in a superposition of both states simultaneously, represented as α|0⟩ + β|1⟩ where |α|² + |β|² = 1. This continuous probability amplitude lets quantum computers explore many solution paths in parallel.
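The normalization rule is simple to try out. The sketch below takes any pair of complex amplitudes, rescales them so that |α|² + |β|² = 1, and reports the chance of reading 0 or 1; the function names are illustrative.

```python
import math


def normalize(alpha: complex, beta: complex):
    """Rescale amplitudes so that |alpha|^2 + |beta|^2 = 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    return alpha / norm, beta / norm


def measurement_probabilities(alpha: complex, beta: complex):
    """Probabilities of measuring 0 and 1 for the state alpha|0> + beta|1>."""
    alpha, beta = normalize(alpha, beta)
    return abs(alpha) ** 2, abs(beta) ** 2


print(measurement_probabilities(1, 1))    # equal superposition
print(measurement_probabilities(1, 1j))   # same odds, different relative phase
print(measurement_probabilities(3, 4))    # weighted toward 1
```

The second example shows why amplitudes carry more than probabilities: the phase does not change the odds of a single readout, but it determines how states interfere in later gates.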

Q: How close are we to quantum advantage in real tasks?

Google’s 53-qubit Sycamore processor completed a sampling task in 200 seconds that, by Google’s estimate, would take Summit, then the world’s fastest supercomputer, ~10,000 years. IBM disputed the claim, arguing that Summit could finish in about 2.5 days, but the experiment marks the first widely accepted demonstration of ‘quantum supremacy’ in a narrow, academic sense.

Q: Why is error correction the biggest hurdle?

Qubits are error-prone and decohere within microseconds to seconds, depending on the hardware. State-of-the-art physical qubits have error rates near 0.1%. To run Shor’s algorithm against 2048-bit RSA you need several thousand logical qubits, which translates to roughly 20 million physical qubits with current codes, far beyond today’s ~1,000-qubit devices.
